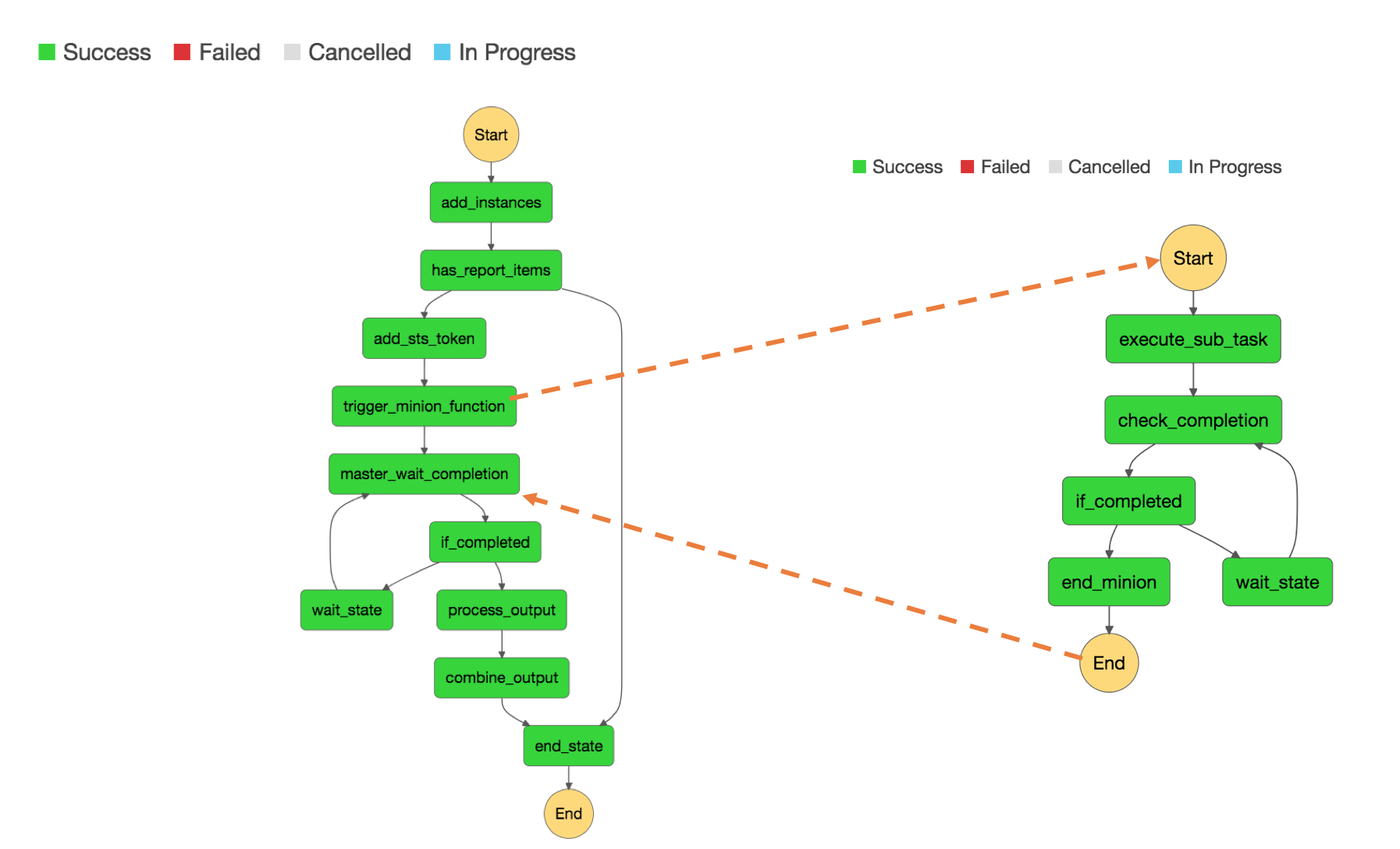

I am currently building a reporting and ops management solution using AWS Systems Manager. The solution is simple: Step Functions, Lambda, Systems Manager Run Command, EC2, and S3.

I was amazed by what you can achieve with Step Functions: you can build, manage, and operate pure microservices [or nano-services]. Step Functions combined with Lambda is a lethal combination. FYI - API Gateway can use a Step Function as a backend.

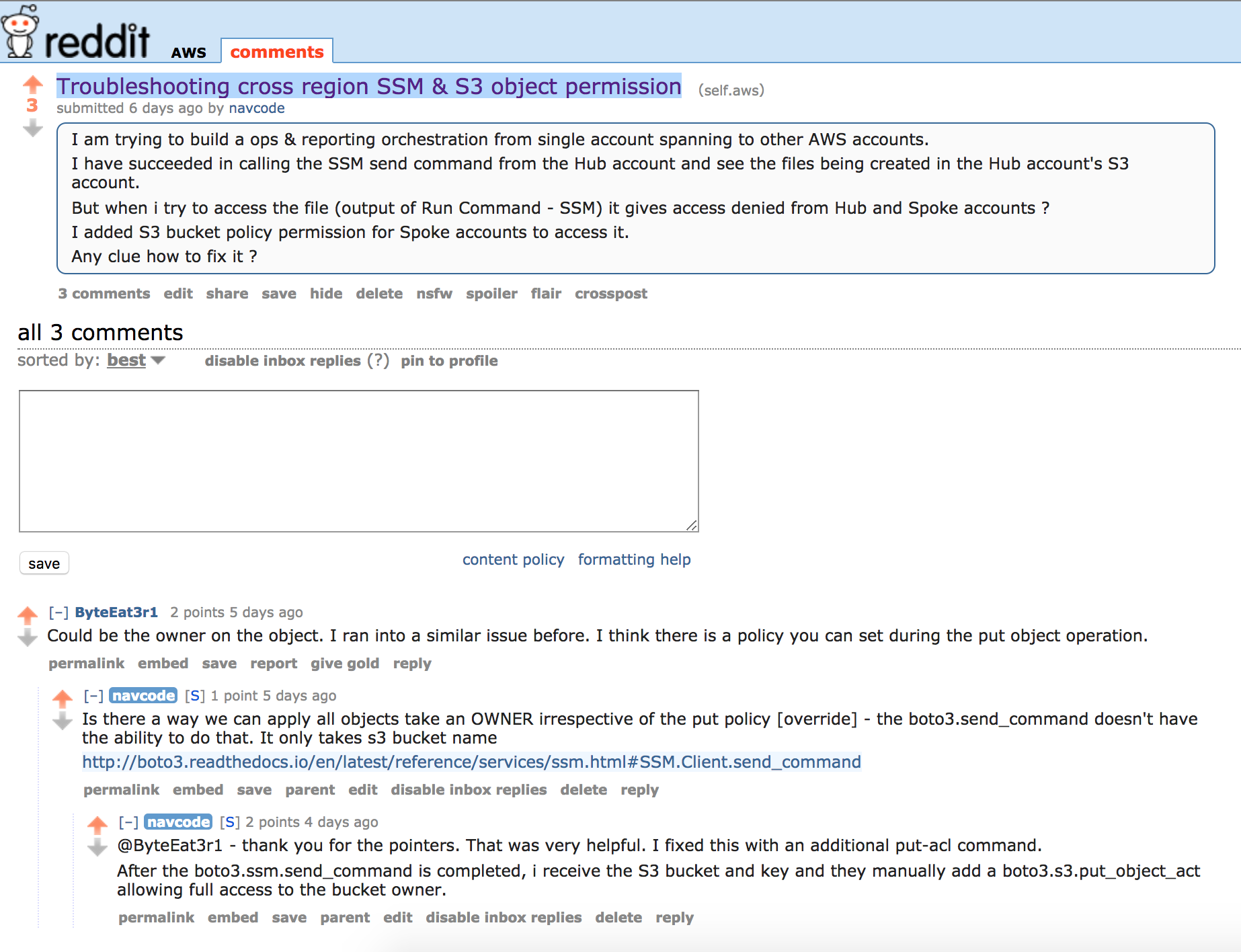

The solution worked really well within a single account. It was an awesome sight to see Step Functions orchestrating and coordinating each step, with SSM Run Command finally dumping the results into S3. I was able to do the same for cross-account [Hub Account] calls and could see the files in S3 [source AWS account] - except that when I tried to access the output, I got an error message.

I went to the /r/aws subreddit to get some help, and that's where I picked up the solution.

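It was clear that the problem was object ownership or ACLs in S3. With S3 ACLs in play, an object uploaded by another account is owned by that account, and the bucket owner cannot read it until the uploader grants access. Currently, the boto3 API for Run Command doesn't accept a parameter to set an ACL on the output object, or offer any other way to deal with the permission issue.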
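To confirm that ownership really is the culprit, one option is to inspect the object's ACL from the account that wrote it. The helper below is a minimal sketch, not part of my solution: `bucket_owner_has_full_control` is a hypothetical name, and it only reads the dictionary shape that boto3's `get_object_acl` returns. The bucket owner's canonical user ID is assumed to be known already.

```python
def bucket_owner_has_full_control(acl, bucket_owner_id):
    """Return True if the ACL grants FULL_CONTROL to the bucket owner.

    `acl` is the dict returned by boto3's s3.get_object_acl(Bucket=..., Key=...).
    `bucket_owner_id` is the bucket owner's canonical user ID (an assumption:
    you have to look it up separately, it is not part of the ACL response).
    """
    for grant in acl.get("Grants", []):
        grantee = grant.get("Grantee", {})
        if (grantee.get("ID") == bucket_owner_id
                and grant.get("Permission") == "FULL_CONTROL"):
            return True
    return False
```

For a Run Command output object written from another account, this would be expected to return `False` until the ACL is updated.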

I tried all sorts of things: a separate S3 account with bucket replication, S3 object copy, listening for S3 events and manually setting the bucket policy, and so on. A few of these work, but none of them is an efficient fix.

The obvious solution, of course, was right there. The boto3 SSM `send_command` call returns the S3 key that was used, and we already know the S3 bucket - so as soon as you learn that the command has succeeded, make a follow-up call to `put_object_acl`:

```python
aws.ssm.execute_ssm(script_location, 'i-12345')

# wait ...

response = aws.get_ssm_status(run_command_id, 'i-12345')

# Keep the part of the output URL after the bucket name and drop the leading "/"
s3_key = response["output_url"].split(S3_BUCKET)[-1][1:]

aws.s3.put_object_acl(ACL='bucket-owner-full-control', Bucket=S3_BUCKET, Key=s3_key)
```
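The `aws` object above is my own wrapper. For anyone without it, here is a rough sketch of the same flow using plain boto3 clients. The function names, the polling interval, and the URL parsing are assumptions for illustration. The `ssm` and `s3` arguments are expected to be boto3 clients, and the `s3` client needs credentials from the account that wrote the object, since only that side can change the ACL.

```python
import time
from urllib.parse import unquote, urlparse


def key_from_output_url(output_url, bucket):
    """Extract the object key from a Run Command output URL.

    Assumes either a path-style URL (.../<bucket>/<key>) or a
    virtual-hosted URL (<bucket>.s3.../<key>).
    """
    path = unquote(urlparse(output_url).path)
    prefix = "/" + bucket + "/"
    if path.startswith(prefix):
        return path[len(prefix):]
    return path.lstrip("/")


def wait_for_command(ssm, command_id, instance_id, delay=5, max_attempts=60):
    """Poll get_command_invocation until the command reaches a terminal status."""
    terminal = {"Success", "Failed", "Cancelled", "TimedOut"}
    for _ in range(max_attempts):
        time.sleep(delay)  # give the invocation time to register before polling
        invocation = ssm.get_command_invocation(
            CommandId=command_id, InstanceId=instance_id
        )
        if invocation["Status"] in terminal:
            return invocation
    raise TimeoutError("command %s did not finish in time" % command_id)


def run_and_share_output(ssm, s3, instance_id, commands, bucket, prefix, delay=5):
    """Run a shell command via SSM, then grant the bucket owner access to stdout."""
    sent = ssm.send_command(
        InstanceIds=[instance_id],
        DocumentName="AWS-RunShellScript",
        Parameters={"commands": commands},
        OutputS3BucketName=bucket,
        OutputS3KeyPrefix=prefix,
    )
    command_id = sent["Command"]["CommandId"]

    invocation = wait_for_command(ssm, command_id, instance_id, delay=delay)
    if invocation["Status"] != "Success":
        raise RuntimeError("command ended with status " + invocation["Status"])

    key = key_from_output_url(invocation["StandardOutputUrl"], bucket)
    s3.put_object_acl(ACL="bucket-owner-full-control", Bucket=bucket, Key=key)
    return key
```

In a Step Functions setup, the polling loop would more naturally become a Wait state plus a status-check Lambda; it is inlined here only to keep the sketch in one place.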

For background: I used a master-minion setup to orchestrate the functions across multiple accounts.
